A TurtleBot4 Lite that explores unknown environments, builds real-time maps, and detects semantic hazards — fully autonomous, zero human input.

We built an autonomous ground robot capable of navigating entirely unknown indoor environments — no pre-loaded maps, no human guidance. It simultaneously maps its surroundings using SLAM, decides where to explore next using frontier-based planning, and detects semantic hazards including HAZMAT signs, fire, cliffs, and narrow corridors using a five-layer sensor fusion pipeline.
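To make the frontier-based planning step concrete, here is a minimal sketch of frontier detection on an occupancy grid. It is an illustration under stated assumptions, not the project's actual node: the cell values follow the common ROS 2 `nav_msgs/OccupancyGrid` convention (-1 unknown, 0 free, 100 occupied), and the function names `find_frontier_cells` and `nearest_frontier`, along with the nearest-cell selection rule, are hypothetical.

```python
from typing import List, Optional, Tuple

Cell = Tuple[int, int]

# Assumed cell values, following the usual OccupancyGrid convention.
UNKNOWN, FREE, OCCUPIED = -1, 0, 100

# 4-connected neighbourhood (up, down, left, right).
NEIGHBOURS = ((-1, 0), (1, 0), (0, -1), (0, 1))


def find_frontier_cells(grid: List[List[int]]) -> List[Cell]:
    """Return free cells that touch at least one unknown cell."""
    rows, cols = len(grid), len(grid[0])
    frontiers: List[Cell] = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in NEIGHBOURS:
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbour is enough
    return frontiers


def nearest_frontier(frontiers: List[Cell], robot: Cell) -> Optional[Cell]:
    """Pick the frontier cell closest to the robot by Manhattan distance.

    Hypothetical selection rule; a real planner may also weigh
    frontier size or expected information gain.
    """
    return min(
        frontiers,
        key=lambda cell: abs(cell[0] - robot[0]) + abs(cell[1] - robot[1]),
        default=None,
    )


if __name__ == "__main__":
    demo_grid = [
        [FREE, FREE, UNKNOWN],
        [FREE, OCCUPIED, UNKNOWN],
        [FREE, FREE, FREE],
    ]
    cells = find_frontier_cells(demo_grid)
    print("frontier cells:", cells)
    print("next goal:", nearest_frontier(cells, robot=(2, 0)))
```

In a sketch like this, exploration would end when `find_frontier_cells` returns an empty list, meaning no reachable free cell borders unknown space.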

The motivation is search-and-rescue and industrial inspection: environments too dangerous for immediate human entry. The robot generates a complete annotated map so first responders know what they are walking into before they enter.

From architecture proposal to scientific dossier — every deliverable completed across Spring 2026.

The complete Milestone 3 demonstration — live SLAM mapping, frontier selection, A* navigation, and semantic hazard detection on the TurtleBot4 Lite. Zero human input.
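As a rough illustration of the A* navigation step named above, the sketch below plans a path on a 4-connected occupancy grid. It assumes unit step costs, a Manhattan-distance heuristic, and that only cells with value 0 are traversable. The function name `astar` and these choices are assumptions for illustration, not a description of the planner that ran on the robot.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]

FREE = 0  # assumed: any other value (unknown or occupied) is not traversable


def astar(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a shortest 4-connected path from start to goal, or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell: Cell) -> int:
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries are (f, g, cell)
    came_from: Dict[Cell, Cell] = {}
    g_cost: Dict[Cell, int] = {start: 0}

    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk parent links back to the start, then reverse.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > g_cost[current]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = current
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            nxt = (r + dr, c + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]] != FREE:
                continue
            new_g = g + 1
            if new_g < g_cost.get(nxt, float("inf")):
                g_cost[nxt] = new_g
                came_from[nxt] = current
                heapq.heappush(open_heap, (new_g + heuristic(nxt), new_g, nxt))
    return None  # goal unreachable


if __name__ == "__main__":
    demo_grid = [
        [0, 0, 0],
        [100, 100, 0],
        [0, 0, 0],
    ]
    print(astar(demo_grid, start=(0, 0), goal=(2, 0)))
```

Treating unknown cells as blocked is the conservative choice here; a planner that is willing to drive into unexplored space would relax that check.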

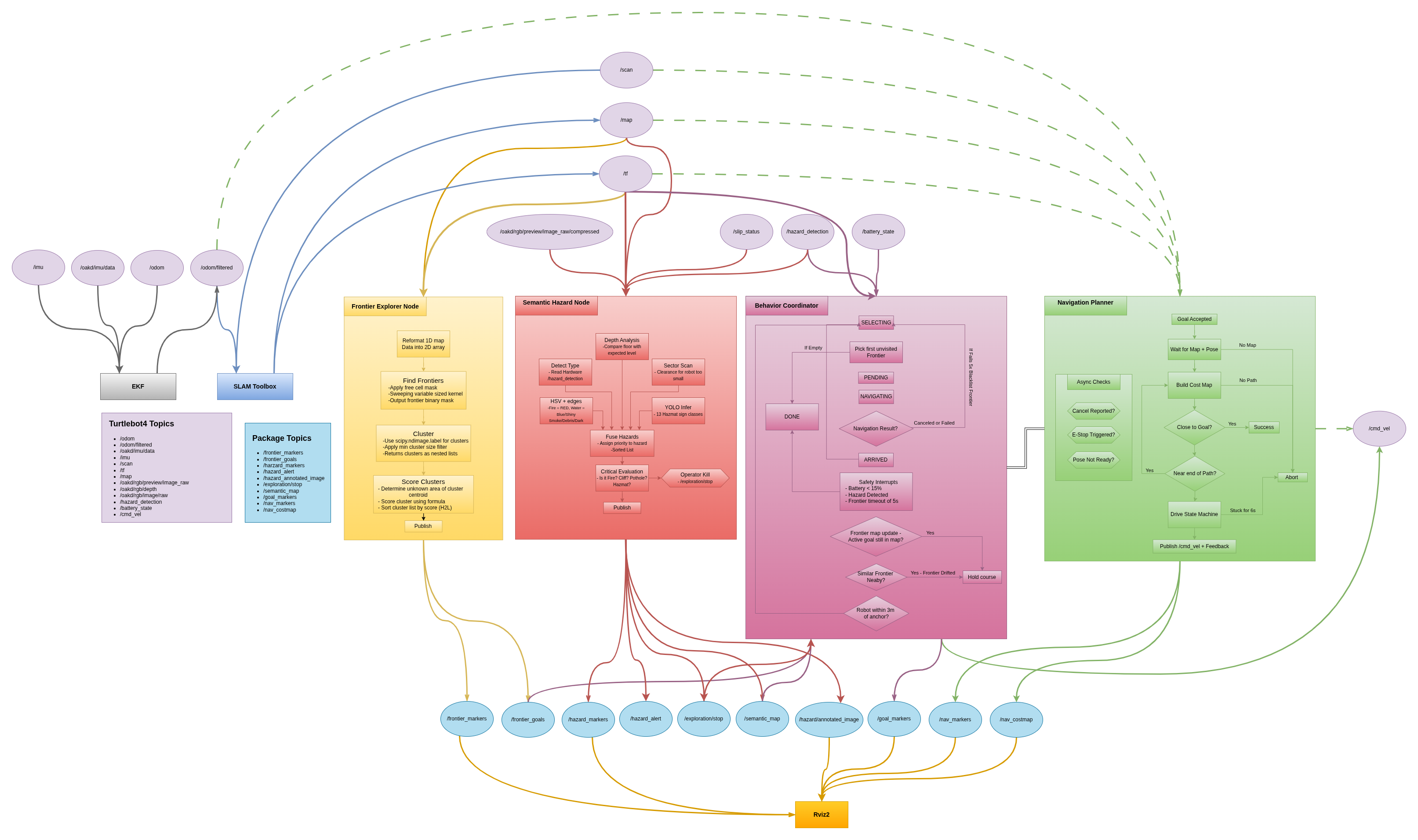

End-to-end Mermaid diagram of every ROS 2 node, topic, and data flow — from raw sensor input to annotated hazard map output.

mermaid_Final.png · Full ROS 2 node & topic graph

Three engineers building autonomous robots from the ground up.

All code is open source and fully traceable to individual authors via git log.
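One way to check that traceability locally is to read the commit history into structured records. The sketch below assumes `git` is installed and the repository is cloned. The helper names `parse_git_log` and `read_git_log`, the tab-separated format string, and the default limit of 20 commits are illustrative choices, not part of the project.

```python
import subprocess
from typing import Dict, List

FIELDS = ("sha", "author", "date", "message")

# %h short hash, %an author name, %ad author date, %s subject; %x09 is a tab.
LOG_FORMAT = "%h%x09%an%x09%ad%x09%s"


def parse_git_log(text: str) -> List[Dict[str, str]]:
    """Parse tab-separated `git log` output into one dict per commit."""
    commits: List[Dict[str, str]] = []
    for line in text.splitlines():
        if not line.strip():
            continue
        # Split on the first three tabs only, so tabs in the subject survive.
        parts = line.split("\t", 3)
        if len(parts) != len(FIELDS):
            continue  # skip lines that do not match the expected format
        commits.append(dict(zip(FIELDS, parts)))
    return commits


def read_git_log(repo_path: str = ".", limit: int = 20) -> List[Dict[str, str]]:
    """Run `git log` in repo_path and return the most recent commits."""
    result = subprocess.run(
        [
            "git", "-C", repo_path, "log",
            f"-n{limit}",
            "--date=short",
            f"--pretty=format:{LOG_FORMAT}",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_git_log(result.stdout)


if __name__ == "__main__":
    for commit in read_git_log("."):
        print(commit["sha"], commit["author"], commit["date"], commit["message"])
```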
